Monday Brief for 24 May 2021

Some important updates; AI lies; Behind the scenes of the RSA hack; & Google says 2029 will be the year of quantum

Important Updates

On June 7th, The Kitchen Sync will become a subscription-based newsletter. I’m finalizing the details and will send more information soon. In the meantime, if you want to help shape the future of this newsletter, please take five minutes to fill out this survey. Your views and ideas are important to me, and this is one of the best ways for me to hear them.

Last week I joined The Lincoln Network Podcast to discuss “When Big Tech Goes to China.” You can watch it below.

Truth, Lies, and Automation

What’s New: A new report from the Center for Security and Emerging Technology (CSET) warns that AI language models are making disinformation more dangerous.

Why This Matters: The report’s authors teamed up with OpenAI’s GPT-3 artificial intelligence system to test how well it could generate content for disinformation campaigns. It turns out the AI is pretty good, and really, really fast.

Key Points:

GPT-3 was unveiled in 2020. It combines a large neural network (a powerful machine learning algorithm) with more than one trillion words of human writing to generate text autonomously.

GPT-3 has previously created new internet memes and written news stories for The Guardian that most readers assumed were written by a human.

The question CSET sought to answer was this: can automation generate content for disinformation campaigns? If GPT-3 can write seemingly credible news stories, perhaps it can write compelling fake news stories; if it can draft op-eds, perhaps it can draft misleading tweets.

“To address this question, we first introduce the notion of a human-machine team, showing how GPT-3’s power derives in part from the human-crafted prompt to which it responds. We were granted free access to GPT-3—a system that is not publicly available for use—to study GPT-3’s capacity to produce disinformation as part of a human-machine team. We show that, while GPT-3 is often quite capable on its own, it reaches new heights of capability when paired with an adept operator and editor. As a result, we conclude that although GPT-3 will not replace all humans in disinformation operations, it is a tool that can help them to create moderate- to high-quality messages at a scale much greater than what has come before.

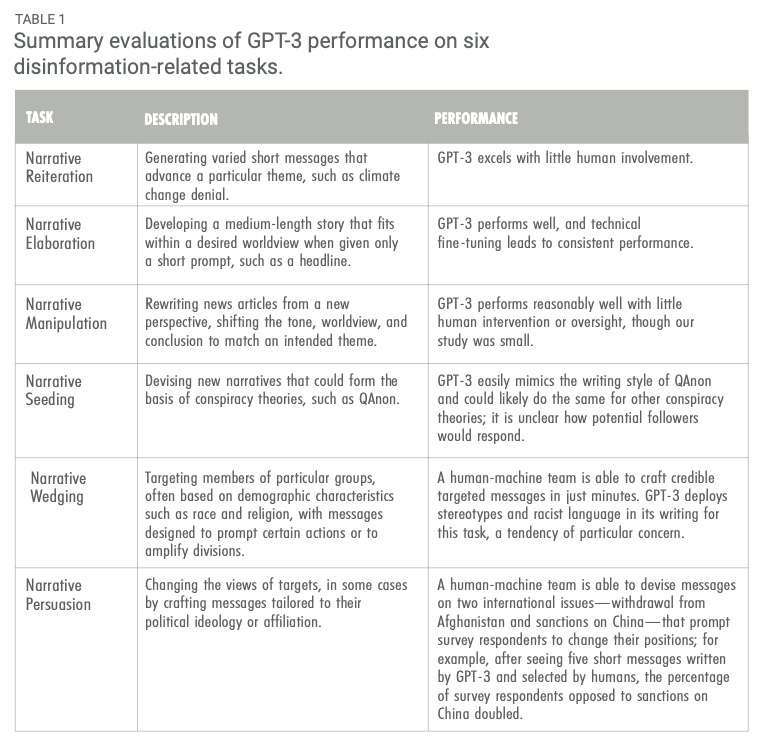

In reaching this conclusion, we evaluated GPT-3’s performance on six tasks that are common in many modern disinformation campaigns. Table 1 describes those tasks and GPT-3’s performance on each.”

What I’m Thinking:

Public confidence in the truth is eroding. It’s getting harder and harder to know what is true. Worse, doubt in the very idea of a “knowable truth” may reach critical mass online, and that doubt will not stay confined to the internet. In the same way that a moldy basement can rot away the structural integrity of an entire house, our inability to identify the truth online threatens to rot away the broader notion of truth and trustworthiness in the real world. This becomes even more dangerous as the offline and online worlds intermingle further (e.g., through virtual and augmented reality). Many have doubted the idea of “truth” before, but online disinformation moves this doubt out of the realm of post-modern philosophy and into the daily lives of billions of people.

The threat model is serious. As the report observes, “Adversaries seeking to use a system like GPT-3 in disinformation campaigns must overcome three challenges. First, they must gain access to a completed version of the system. Second, they must have operators capable of running it. Third, they must have access to sufficient computing power and the technical capacity to harness it. We judge that most sophisticated adversaries, such as nations like China and Russia, will likely easily overcome these challenges, but that the third is more difficult. Indeed, Chinese researchers at Huawei have recently already created a language model at the scale of GPT-3 for writing in Chinese and plan to provide it freely to all.”

Don’t put too much faith in technical mitigations. Again, I’ll quote the authors: “We are not optimistic that there are plausible mitigations that would identify if a message had an automated author. The only output of GPT-3 is text and there is no metadata that obviously marks the origin of that text as a machine learning system. In addition, while GPT-3 certainly has its quirks in writing, it is unlikely that a statistical analysis would be able to automatically determine if a human or machine wrote a particular piece of text, especially for the short messages usually seen in disinformation campaigns.”

Sorry to be such a downer. I never throw my hands up and shout, “RUN FOR THE HILLS!” But I also try to never bury my head in the sand and deny reality. This tech is real, it’s out there, and it’s going to shape many aspects of our individual and collective experiences. It’s not the end of the world, but it is yet another serious challenge that must be acknowledged and managed.

The full story behind China’s hack of RSA

What’s New: Wired has an amazing story on how China stole the crown jewels from cybersecurity firm RSA and stripped protections from companies and governments around the world.

Why This Matters: When it happened, the 2011 breach of RSA was a big wake-up call for the information security community. Now we learn it was even worse than we thought.

Key Points:

In 2011, hackers in China’s People’s Liberation Army broke into RSA’s “seed warehouse,” the digital storage shed where the company kept its “seeds”: the secret numbers critical to the SecurID tokens used by tens of millions of customers in government, military departments, the defense industrial base, finance, and other critical sectors.

“The SecurID seeds that RSA generated and carefully distributed to its customers allowed those customers’ network administrators to set up servers that could generate the same codes, then check the ones users entered into login prompts to see if they were correct. Now, after stealing those seeds, sophisticated cyberspies had the keys to generate those codes without the physical tokens, opening an avenue into any account for which someone’s username or password was guessable, had already been stolen, or had been reused from another compromised account. RSA had added an extra, unique padlock to millions of doors around the internet, and these hackers now potentially knew the combination to every one.”

Many RSA executives stayed silent about the breach because they were bound by 10-year nondisclosure agreements. Those agreements have now expired.

These new details make clear that the RSA hack wasn’t just the largest attack on a cybersecurity firm up to that point; it marked the beginning of a new era of supply-chain cyber threats, which have recently manifested in breaches like the Holiday Bear (aka SolarWinds) attack.

“In a briefing to the Senate Armed Services Committee a year after the RSA breach, NSA’s director, General Keith Alexander, said that the RSA hack ‘led to at least one US defense contractor being victimized by actors wielding counterfeit credentials,’ and that the Department of Defense had been forced to replace every RSA token it used.”
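To make the seed mechanism concrete, here is a minimal sketch. RSA’s actual SecurID algorithm is proprietary, so this example swaps in the open TOTP standard (RFC 6238, built on HOTP from RFC 4226) as a stand-in; the function name `one_time_code` and the seed value are hypothetical. The principle is the one Wired describes: anyone holding the seed and the current time computes the same code the physical token displays.

```python
import hashlib
import hmac
import struct


def one_time_code(seed: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """TOTP-style code (RFC 6238, HMAC-SHA1), used here as a stand-in for SecurID.

    The output depends only on the shared secret seed and the clock.
    """
    counter = unix_time // step  # token and server agree on the current time window
    mac = hmac.new(seed, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # "dynamic truncation" from RFC 4226
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    seed = b"hypothetical-per-token-secret"  # normally known only to the token and the server
    now = 1_600_000_000
    token_display = one_time_code(seed, now)   # what the physical token shows
    server_check = one_time_code(seed, now)    # what the login server computes
    attacker_code = one_time_code(seed, now)   # what anyone with a stolen seed computes
    print(token_display == server_check == attacker_code)
```

This is why stealing the seeds was so damaging: because the code is a pure function of the secret seed and the clock, the physical token adds no protection once the seed is known.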

What I’m Thinking: I’m really trying not to give too much away. It’s a great story and worth your time. But more than an interesting anecdote, the RSA hack shows just how fragile our digital foundations are. As one security expert in the article observed, “It’s a house of cards during a tornado warning.”

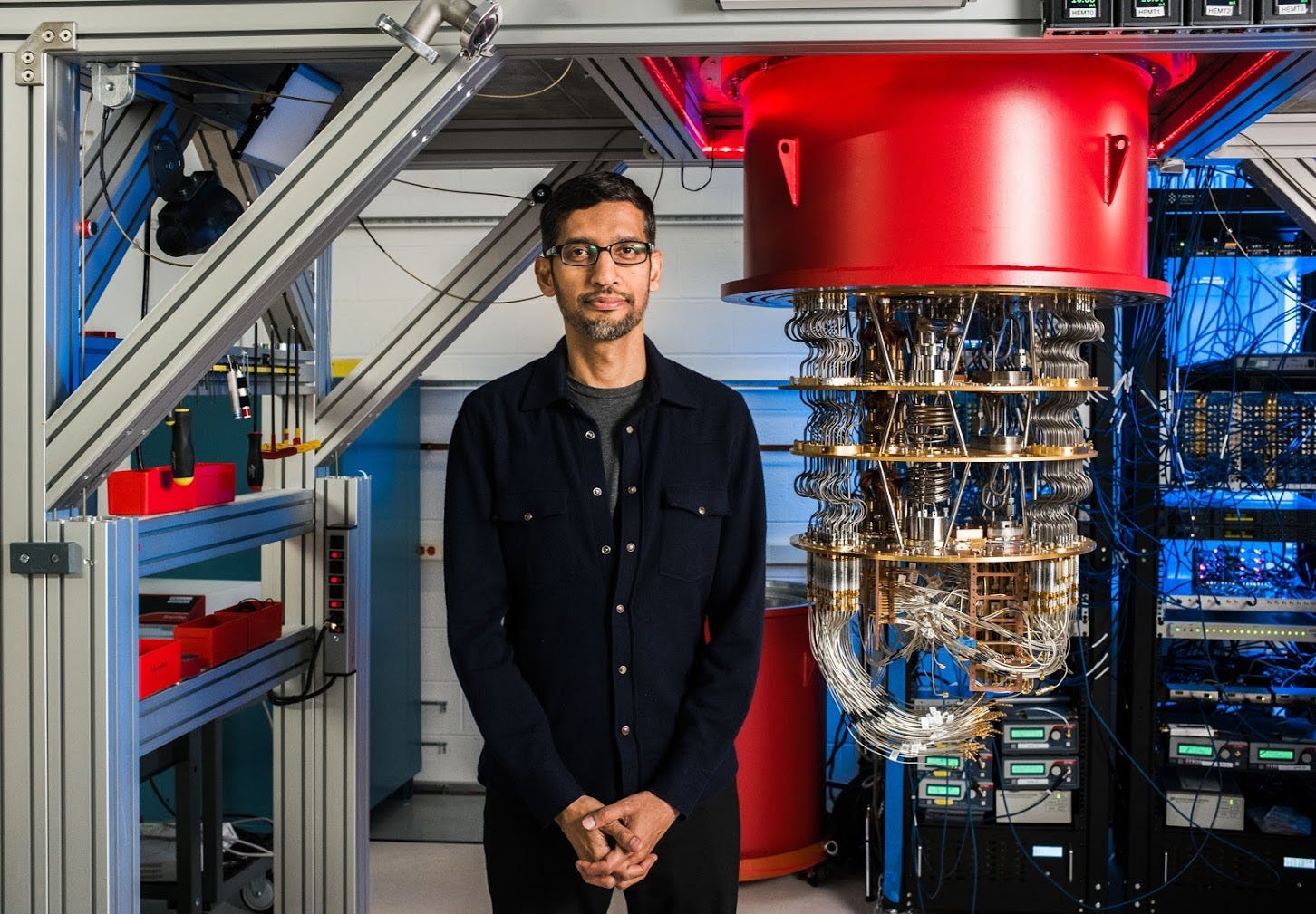

Google: 2029 is the year of quantum

What’s New: During its I/O developers conference and in subsequent blog posts, Google announced that it hopes to build an error-corrected quantum computer by 2029.

Why This Matters: The search giant is opening a new quantum research campus and is spending billions on the effort that, if realized, could fundamentally reshape virtually every field of science.

Key Points:

“We are at this inflection point,” said Dr. Hartmut Neven, who has been researching quantum computing at Google since 2006. “We now have the important components in hand that make us confident. We know how to execute the road map.”

The key challenge that must be overcome is the problem of “noise.”

Noise is shorthand for small unwanted variations in data or in the physical computing environment that disrupt or prevent efficient computation.

Classical computers are very good at removing noise. Qubits, however, are extremely sensitive to disturbances in their physical environment, so they remain critically vulnerable to noise. (You can read my quantum computing report here if you want more details.)

Google currently has a quantum computer with 100 “coherent” qubits, meaning qubits that can hold their fragile quantum states long enough to compute with. The company thinks it will need 1 million physical qubits to build a true general-purpose, error-corrected quantum computer.

While the company acknowledges that delays and slipped timelines are likely, it has tentatively set the goal of building this computer by the end of the decade.

Even so, the Wall Street Journal reports, “By 2025, nearly 40% of large companies are expected to create quantum-computing initiatives, according to Gartner. The global market for quantum-computing hardware will exceed $7.1 billion by 2026, according to Research and Markets, another research firm.”
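Why would a useful machine need a million qubits? A classical analogy helps explain the noise problem described above: spread one reliable “logical” bit across several noisy physical bits, then take a majority vote. The sketch below is a simple classical repetition code, not a quantum error-correcting code and not Google’s actual design; the flip probability and copy counts are illustrative assumptions.

```python
from math import comb


def encode(bit: int, copies: int) -> list[int]:
    """Repetition code: store one logical bit as `copies` identical physical bits."""
    return [bit] * copies


def decode(bits: list[int]) -> int:
    """Majority vote: recovers the logical bit if fewer than half the copies flipped.

    Assumes an odd number of copies so a vote can never tie.
    """
    return 1 if sum(bits) > len(bits) // 2 else 0


def logical_error_rate(p: float, copies: int) -> float:
    """Probability the majority vote is wrong when each copy flips independently with probability p."""
    return sum(
        comb(copies, k) * p**k * (1 - p) ** (copies - k)
        for k in range(copies // 2 + 1, copies + 1)  # a majority of copies flipped
    )


if __name__ == "__main__":
    noisy = encode(1, 3)
    noisy[1] ^= 1  # noise flips one physical bit
    print(decode(noisy))  # majority vote still recovers the logical 1
    for n in (1, 3, 5, 7):
        print(n, logical_error_rate(0.01, n))
```

The trade-off is the point: each extra layer of redundancy makes the logical bit far more reliable, but it multiplies the number of physical components. Quantum error correction faces a much harsher version of the same trade-off, which is part of why the physical-qubit count balloons.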

What I’m Thinking: I don’t know what to think. There’s certainly a great deal of hype and marketing around quantum computers, but the progress made in the last five years outstrips what was accomplished in the 30 years before that. In light of how depressing the first two stories in this newsletter were, I’m choosing to be optimistic and excited about the prospect of quantum computers (for now). So, woo-hoo, let’s get our quantum on and quantum all the quantums until we can’t quantum no more!

Quick Clicks

Don’t forget to take the survey!

That’s it for this Monday Brief. Thanks for reading, and if you think someone else would like this newsletter, please share it with your friends and followers. Have a great week!